LiDAR Camera Synchronization

March 18, 2026

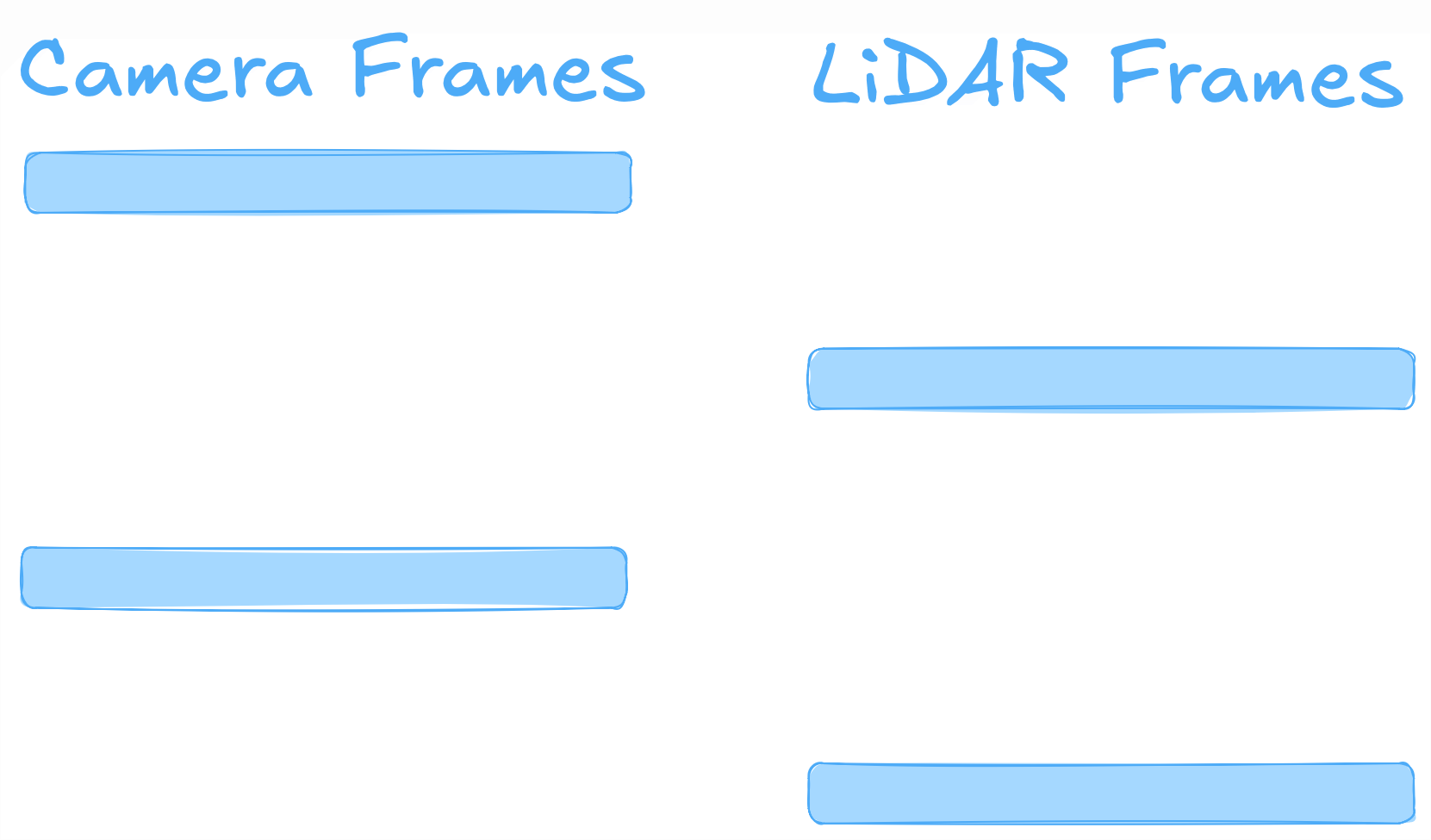

I have been working on synchronizing the LiDAR and camera on the driverless car for our Carnegie Mellon Racing team. Starting this fall, our team analyzed the car's previous systems and found that LiDAR and camera frames were not being captured at the same time. Instead, the system used motion modeling to extrapolate camera positions so that the LiDAR and camera frames could be matched. Delays could exceed 30 ms, greatly diminishing the accuracy of our perceptions timeline.

The goal of our project was to synchronize the LiDAR and cameras so that they capture frames at the same time, increasing the robustness of our perceptions timeline and, in turn, improving feature matching and object detection. We switched the timestamping system to be entirely hardware-based, using voltage spikes to trigger frame capture.

I led the compute-side frame and timestamp handling. This involved receiving frames, calculating a delay, and sending the delay over the CAN bus using C++ and ROS2. Additionally, I had to deploy and manage a thread that handles individual bits from CAN messages, following thread-safe practices throughout. The entire process involved comprehensive design reviews and code reviews to ensure the code I wrote was safe and clean.
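As a rough illustration of that flow (not the team's actual code), a minimal C++ sketch might look like the following. It computes the offset between two frame timestamps, packs it into a CAN payload, and hands values between threads through a mutex-guarded slot. Every name here (`frame_delay`, `pack_delay`, `unpack_delay`, `DelayMailbox`) is hypothetical, and the microsecond unit and the little-endian 32-bit payload layout are assumptions. ROS2 and the CAN driver are left out so the sketch stays self-contained.

```cpp
#include <array>
#include <chrono>
#include <cstddef>
#include <cstdint>
#include <mutex>

// All names below are hypothetical and chosen for illustration only.
using Micros = std::chrono::microseconds;
using CanPayload = std::array<std::uint8_t, 8>;  // classic CAN data field is at most 8 bytes

// Signed offset of the camera frame relative to the LiDAR frame.
inline Micros frame_delay(Micros lidar_stamp, Micros camera_stamp) {
    return camera_stamp - lidar_stamp;
}

// Pack the delay as a signed 32-bit microsecond count, little-endian,
// into the first four payload bytes (assumed layout). Remaining bytes stay zero.
inline CanPayload pack_delay(Micros delay) {
    const auto raw =
        static_cast<std::uint32_t>(static_cast<std::int32_t>(delay.count()));
    CanPayload payload{};
    for (std::size_t i = 0; i < 4; ++i) {
        payload[i] = static_cast<std::uint8_t>((raw >> (8 * i)) & 0xFFu);
    }
    return payload;
}

// Inverse of pack_delay: rebuild the signed delay from the first four bytes.
inline Micros unpack_delay(const CanPayload& payload) {
    std::uint32_t raw = 0;
    for (std::size_t i = 0; i < 4; ++i) {
        raw |= static_cast<std::uint32_t>(payload[i]) << (8 * i);
    }
    return Micros{static_cast<std::int32_t>(raw)};
}

// Single-slot mailbox guarded by a mutex, so a CAN worker thread and a
// frame-handling thread can exchange the latest delay without a data race.
class DelayMailbox {
public:
    // Overwrite the slot with the newest delay.
    void store(Micros delay) {
        std::lock_guard<std::mutex> lock(mutex_);
        value_ = delay;
        has_value_ = true;
    }

    // Move the stored delay into `out` and clear the slot.
    // Returns false if nothing has been stored since the last take().
    bool take(Micros& out) {
        std::lock_guard<std::mutex> lock(mutex_);
        if (!has_value_) {
            return false;
        }
        out = value_;
        has_value_ = false;
        return true;
    }

private:
    std::mutex mutex_;
    Micros value_{0};
    bool has_value_ = false;
};
```

In this sketch, a frame callback would call `frame_delay` and `store` the result, while a separate sender thread would `take` it and pass `pack_delay`'s output to whatever CAN interface is in use. Keeping only the latest value is one possible design choice; a queue would be the alternative if every delay sample had to be transmitted.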

The project should be deployed in later Formula SAE competitions. Feel free to ask me more about the project. I’m unable to post it publicly since our team has not competed yet.